We can ask Siri, Alexa or Google to tell us how our favorite team did last night or what the weather will be like tomorrow. Netflix and Hulu learn from what we watch, and suggest television shows and movies we’re likely to enjoy. And although the technology is still in its infancy, self-driving cars could revolutionize the way we travel. The impact of artificial intelligence (AI) is impossible to ignore. But despite the rapid advances in the application of AI, you won’t find AI technology running your lawyer’s office quite yet.

So what holds some legal professionals back? The short answer: there is no single reason. Instead, a multitude of factors prevent lawyers from implementing AI as part of their practice, let alone using it to its full capacity.

The Slow Adoption of AI in the Legal World

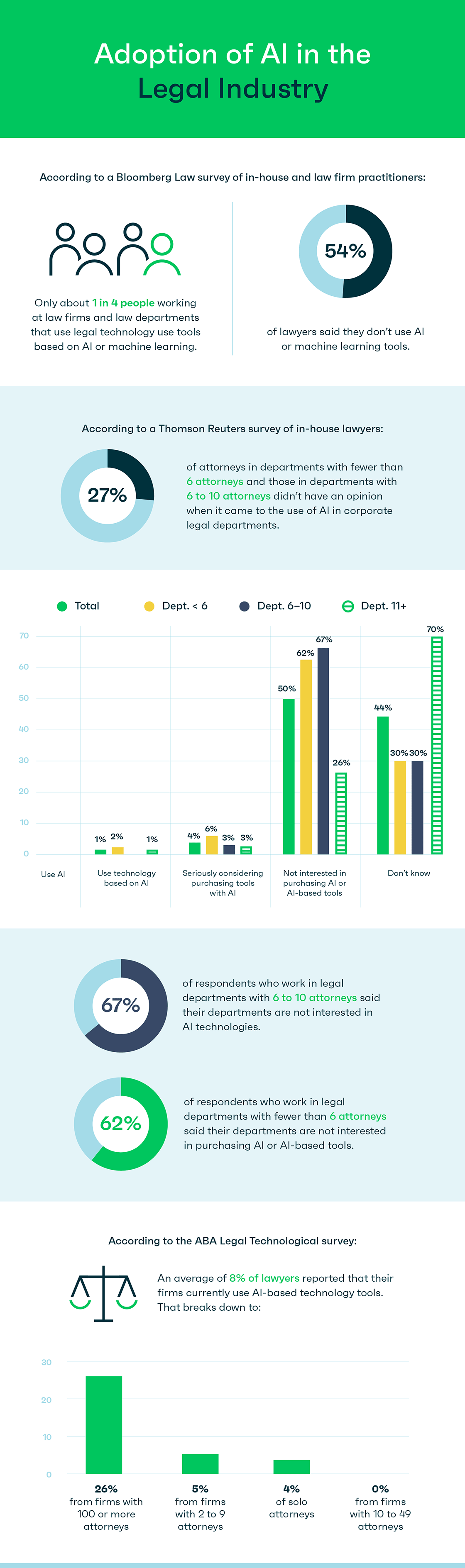

According to a 2019 Bloomberg Law survey, 54 percent of lawyers said they don’t use AI or machine learning tools. One quarter of the respondents lacked enough basic knowledge about AI to know whether or not they were using the technology in their practice.

One of the resolutions passed by the American Bar Association at its 2019 annual meeting deals explicitly with AI in the practice of law. It “urges courts and lawyers to address the emerging ethical and legal issues related to the usage of artificial intelligence (‘AI’) in the practice of law including: (1) bias, explainability, and transparency of automated decisions made by AI; (2) ethical and beneficial usage of AI; and (3) controls and oversight of AI and the vendors that provide AI.” The resolution identifies some of the primary uses of AI in the law, including:

- Electronic discovery/predictive coding

- Litigation analysis/predictive analysis

- Contract management

- Due diligence reviews

- “Wrong doing” detection

- Legal research

- AI to detect deception

Despite the clearly defined benefits of integrating AI into a law firm’s workflow, the slow adoption of these technologies is likely to continue for a variety of reasons.

Reasons Why Law Firms Aren’t Jumping to Implement AI Initiatives

Reason number one for the relative absence of AI at firms? Widespread resistance to change within the legal profession, especially when it comes to technology. Many lawyers are deeply set in their ways and have what psychologist Carol Dweck calls a fixed mindset rather than a growth mindset. In other words, they may be hesitant to view the challenges of AI as opportunities. Lawyers are, generally speaking, a risk-averse group. Because they have such a substantial ethical obligation to their clients, and because the consequences of violating those obligations can be so severe, it is understandable that they avoid taking unnecessary risks. Not all lawyers are Luddites, but it is essential to understand the underlying reasons why law firms often take their time when it comes to technological advancements.

Cost, especially when coupled with the potential return on investment, plays a major role in whether or not a firm adopts AI-powered technology. According to the Bloomberg Law survey referenced above, more than half of respondents from in-house counsel offices said the chief barrier to adopting technology at a faster pace is lack of funding. At a time when law firms want to cut costs to stay competitive (or in some cases, to survive), making a case for a substantial up-front investment in AI technology may feel counterintuitive.

Even if a law firm chooses to invest in AI software, there is the matter of getting everyone to use it. The learning curve for AI tools can be steep, meaning attorneys may take years to get the hang of things (which is especially tricky considering AI technology is constantly changing). Factor in that most lawyers lack the free time to learn not only new software but an entirely new way of using technology as part of their daily workflow, and the obstacles to adopting AI become abundantly clear.

Another concern for law firms is a potential lack of understanding of what type(s) of AI technology best suit their practice. The fear of aggressive sales pitches for technology that overpromises and underperforms is real. Fortunately, people are working to address these concerns. In 2019, Duke University Law School worked with the Electronic Discovery Reference Model (EDRM) to develop and release a set of Technology-Assisted Review (TAR) Guidelines. The 50-page document, written for lawyers and the courts, goes beyond defining and explaining the AI-driven TAR process and discusses workflow applications and cost-related factors for law firms to consider. Educating the profession on AI legal issues and technology in a straightforward and practical way gives law firms the information they need to make more informed decisions.

Last but not least, many lawyers have very real—and very understandable—concerns about the ethics of AI in legal applications. AI systems, by definition, remove some level of human input and supervision. For instance, if a lawyer puts their trust in an AI application and it misses a crucial document or provision that human eyes may have caught, who is to blame? There are serious ethical implications relating to the use of AI in the law that still need sorting out—including diversity and inclusivity challenges as well as the security of confidential information—and it’s easy to see why nobody wants to be the test case. The ABA directly addressed this topic in its 2019 resolution on the use of AI in the practice of law, recognizing that AI ethics extend beyond professional ethics.

Conclusion

The above factors explain the primary barriers to entry for the use of AI in law. While some of the financial reasons apply to all industries, others are unique to the practice of law. As we continue to identify and define the appropriate role of AI in the legal profession, the evidence on long-term cost savings and opportunity creation should lead lawyers to accept the use of AI in law and be open to its many benefits.